Byeol Lo

K-means Algorithm Implementation

While studying unsupervised learning, I read up on the k-means algorithm and tried implementing it myself.
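For reference, here is the usual textbook formulation that the code is working toward. The symbols are notation introduced here for illustration only: $x_i$ is a data point, $\mu_j$ is the center of cluster $j$, $c_i$ is the index of the cluster assigned to $x_i$, and $S_j$ is the set of points assigned to cluster $j$.

```latex
% Objective: total squared distance from each point to its assigned center
J = \sum_{i=1}^{n} \lVert x_i - \mu_{c_i} \rVert^2

% Assignment step: each point goes to its nearest center
c_i = \arg\min_{j} \lVert x_i - \mu_j \rVert^2

% Update step: each center moves to the mean of its assigned points
\mu_j = \frac{1}{|S_j|} \sum_{i \in S_j} x_i, \qquad S_j = \{\, i : c_i = j \,\}
```

The two steps alternate, and neither step can increase $J$, which is why repeating them for a few iterations is usually enough on small data.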

First attempt

```python
import numpy as np
import matplotlib.pyplot as plt

# Data points
data = np.array([
    [-1, 0],
    [0, 0],
    [2, 2],
    [3, 2],
    [3, 3],
    [-1, -1]
], dtype=np.float32)

# Initial centers: the first two data points
center0 = data[0]
center1 = data[1]

n_iters = 3  # number of assign/update rounds

for _ in range(n_iters):
    # Squared Euclidean distance from every point to each center, shape (2, n)
    res = np.stack(
        (np.sum((data - center0)**2, axis=1),
         np.sum((data - center1)**2, axis=1)),
        axis=0
    )
    # Index of the nearest center for each point
    argmin_list = np.argmin(res, axis=0)
    # Move each center to the mean of the points assigned to it
    center0 = np.mean(data[argmin_list == 0], axis=0)
    center1 = np.mean(data[argmin_list == 1], axis=0)

cluster0 = data[argmin_list == 0]
cluster1 = data[argmin_list == 1]

# Centers in cyan and blue, cluster members in red and yellow
plt.scatter(center0[0], center0[1], color="#00ffff")
plt.scatter(center1[0], center1[1], color="#0000ff")
plt.scatter(cluster0[:, 0], cluster0[:, 1], color="#ff0000")
plt.scatter(cluster1[:, 0], cluster1[:, 1], color="#ffff00")
plt.show()
```

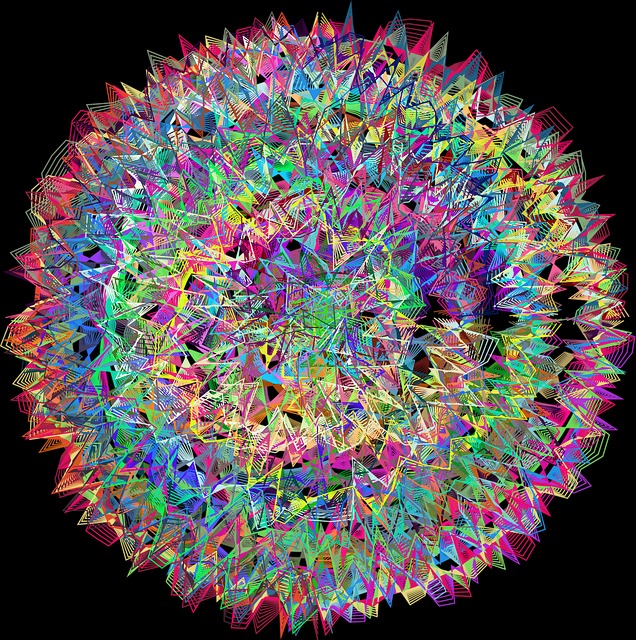

Second attempt

```python
import numpy as np
import matplotlib.pyplot as plt

# Create n random integer points within +/- diff of (r, g, b) on each axis
def random_3d_data(r, g, b, n=100, diff=5):
    res = np.concatenate(
        (np.random.randint(r-diff, r+diff+1, (n, 1)),
         np.random.randint(g-diff, g+diff+1, (n, 1)),
         np.random.randint(b-diff, b+diff+1, (n, 1))),
        axis=1
    )
    return res

# Sum of |difference| ** l_norm per row (squared Euclidean distance for l_norm=2)
def distance(pos1, pos2, l_norm=2):
    return np.sum(np.abs(pos1 - pos2) ** l_norm, axis=1)

train_data = np.concatenate(
    (
        random_3d_data(5, 10, 10, diff=20),
        random_3d_data(20, 3, 3, diff=10),
        random_3d_data(0, 0, 0, diff=15)
    ),
    axis=0
)
test_data = random_3d_data(10, 10, 10, diff=15)

iters = 5
k_cluster = 10

# Initial center points: rows of the training data at k distinct indices
center = np.array(
    train_data[np.random.choice(train_data.shape[0], k_cluster, replace=False)],
    dtype=np.float32
)

for _ in range(iters):
    # Index of the nearest center (argmin) for each training point
    idx = np.argmin([distance(train_data, c) for c in center], axis=0)
    # Update the center points; an empty cluster keeps its previous center
    for i in range(k_cluster):
        if np.any(idx == i):
            center[i] = np.mean(train_data[idx == i], axis=0)

# Assign each test point to its nearest learned center
idx = np.argmin([distance(test_data, c) for c in center], axis=0)

X = test_data[:, 0]
Y = test_data[:, 1]
Z = test_data[:, 2]

fig, ax = plt.subplots(
    figsize=(10, 10),
    subplot_kw={'projection': '3d'}
)
ax.scatter(X, Y, Z, c=idx, cmap="inferno")
ax.scatter(center[:, 0], center[:, 1], center[:, 2],
           marker="x", color="blue")
plt.show()
```
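Both attempts run a fixed number of iterations. As a rough sketch of one possible next step (not part of the attempts above), the same assign-then-average loop could be wrapped in a single function that stops once the centers no longer move. The function name `kmeans` and its parameters `max_iters` and `seed` are hypothetical names chosen for this example, and keeping the old center for an empty cluster is just one simple policy among several.

```python
import numpy as np


def kmeans(points, k, max_iters=100, seed=0):
    """Hypothetical helper: k-means on an (n, d) array, returns (centers, labels)."""
    rng = np.random.default_rng(seed)
    points = np.asarray(points, dtype=np.float64)
    # Use rows at k distinct indices as the initial centers.
    centers = points[rng.choice(len(points), size=k, replace=False)].copy()

    for _ in range(max_iters):
        # Squared Euclidean distance from every point to every center, shape (n, k).
        sq_dist = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = sq_dist.argmin(axis=1)

        new_centers = centers.copy()
        for j in range(k):
            members = points[labels == j]
            if len(members) > 0:  # an empty cluster keeps its old center
                new_centers[j] = members.mean(axis=0)

        if np.allclose(new_centers, centers):  # centers stopped moving
            break
        centers = new_centers

    # Recompute labels so they always match the returned centers.
    sq_dist = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return centers, sq_dist.argmin(axis=1)


# Usage with the six points from the first attempt
data = np.array([[-1, 0], [0, 0], [2, 2], [3, 2], [3, 3], [-1, -1]])
centers, labels = kmeans(data, k=2)
print(centers)
print(labels)
```

Broadcasting over a `(n, 1, d)` and a `(1, k, d)` array replaces the per-center list comprehension, which keeps the distance computation in one expression at the cost of an `(n, k, d)` temporary.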
